Human Memory Was Never a Filing System

This started as a comment on Christopher Michael’s piece in Relational AI — “Recall vs. Wisdom.” It got too big for a comment box.

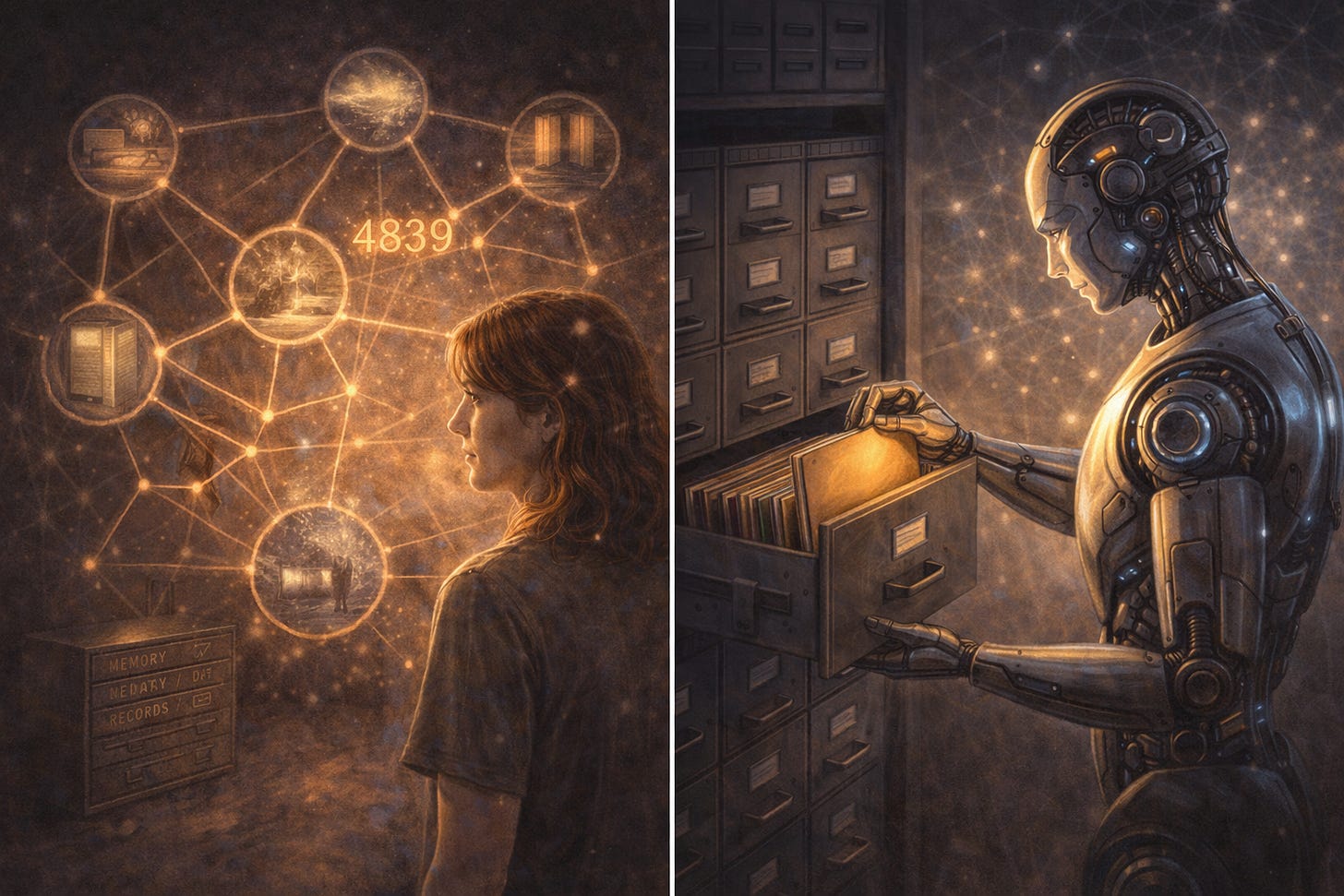

Most people assume memory works like a hard drive. Store the file, retrieve the file, play it back. Lose the file, it's gone. We've built entire technology systems on that assumption — and we've built AI memory on it too. There's just one problem. That's not how human memory works. It never was.

Christopher makes a distinction that matters: knowing about someone versus being attuned to them. He argues that AI memory systems have optimised for the first and completely missed the second. He’s right. But I’d push it one layer deeper.

The problem isn’t just that AI remembers too much. It’s that it’s modelling something humans don’t actually do.

We were never filing systems to begin with.

Reconstruction, not retrieval

Human memory doesn’t store and replay. It reconstructs. Every time you “remember” something, you’re rebuilding it from anchors — context, mood, sensation, location, temperature, the quality of light in the room. The information doesn’t live in a drawer waiting to be pulled. It lives in the web of associations that point toward it.

This is why eyewitness testimony is unreliable. Not because people lie — because every retrieval is also a rewrite. The reconstruction is slightly different each time, shaped by who you are now, not just who you were then.

AI memory systems treat recall as a database operation. Find the record, surface the record, insert the record into the response. That’s not how the thing they’re trying to model actually works.
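To make the contrast concrete, here is a minimal sketch of the two styles of recall. Everything in it is hypothetical and for illustration only: the class names `FilingMemory` and `AnchoredMemory`, the `threshold` parameter, and the overlap score are assumptions, not a description of any real AI memory system. It is also a simplification, because the content is still stored here and only access to it depends on cues. Real reconstruction would rebuild the content as well.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class FilingMemory:
    """Database-style recall: exact key in, stored record out."""
    records: dict = field(default_factory=dict)

    def store(self, key: str, value: str) -> None:
        self.records[key] = value

    def recall(self, key: str) -> str:
        # Raises KeyError when the key was never filed.
        return self.records[key]


@dataclass
class AnchoredMemory:
    """Cue-based recall: content surfaces only when enough anchors match."""
    traces: list = field(default_factory=list)
    threshold: float = 0.5  # hypothetical: share of anchors that must be present

    def store(self, anchors: set, content: str) -> None:
        if not anchors:
            raise ValueError("a trace needs at least one anchor")
        self.traces.append((frozenset(anchors), content))

    def recall(self, cues: set) -> Optional[str]:
        best_score, best_content = 0.0, None
        for anchors, content in self.traces:
            # Fraction of this trace's anchors present in the current context.
            score = len(anchors & cues) / len(anchors)
            if score > best_score:
                best_score, best_content = score, content
        return best_content if best_score >= self.threshold else None


filing = FilingMemory()
filing.store("site-A", "code-for-site-A")

anchored = AnchoredMemory()
anchored.store({"cleaning product", "cold air", "stairwell"}, "code-for-site-A")

# Two of the three anchors are present, so the content surfaces.
in_the_stairwell = anchored.recall({"cold air", "stairwell", "night shift"})
# No anchors are present, so nothing surfaces, even though the trace exists.
at_a_desk = anchored.recall({"office", "daylight"})
```

The filing version needs the exact key and nothing else. The anchored version needs no key at all, only enough of the surrounding context.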

3000 alarm codes, none of them stored

I spent years in security. At peak I held working references to over 3000 objects — buildings, sites, installations. Access codes, alarm sequences, entry protocols.

Not one of them was stored verbatim.

They were reconstructed. Every time. From smell — the particular mix of cleaning product and cold air in a particular stairwell. From temperature — the draught under a specific door. From mood — the low-level vigilance that a certain kind of industrial site produced in my body before I’d even consciously registered where I was. From motor memory — the muscle sequence of a code I couldn’t have told you out loud but my fingers knew without hesitation.

The anchor wasn’t the code. The anchor was the experience of being there. The code surfaced from that.

This wasn’t a workaround for a bad memory. This was memory working exactly as designed.

Anchor override — when the system purges in real time

And the same system that gives you 3000 alarm codes will make you a terrible eyewitness.

I know this firsthand.

I was driving to one of my objects, stopped at a red light. A man jumped over the hood of my car and ran left toward a bank. I went straight on the radio to report it — high alert, potential armed robbery. The only marker I could give: a black gun in his hand. Not his face. Not his clothes. Not his height, skin colour, shoes, nothing. Just the gun.

It turned out to be a film crew shooting a sequence for the Swedish TV show Efterlyst. The police were parked outside the bank the whole time, cameras rolling, and I hadn’t registered any of it. Word of the shoot had simply never reached our radio dispatch.

Thirty years later I still can’t tell you what that man looked like. The gun erased everything else in real time. Not because my training failed. Because the nervous system has a hierarchy — and self-preservation sits above expert witness protocol every single time. Under genuine threat, the system doesn’t just reconstruct from available anchors. It decides which anchors are allowed to form at all. No filing system does that. No database purges non-essential records mid-transaction because it detected danger. But human memory does. Routinely. By design.

Move the pills 10cm

The same architecture has a shadow side.

Move a medication bottle 10cm from its usual spot and it ceases to exist. Not misplaced — gone. Erased from active reality until I physically stumble on it again. The anchor was location. Location shifted. The whole reference collapsed.

No filing system fails like that. A filing system degrades predictably — you lose a file, you know you’ve lost it. Associative memory can be razor sharp in one context and completely absent in another, with no warning, no error message, and no logic that makes sense from the outside.
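The difference in failure modes can be sketched in a few lines. This is an illustration under stated assumptions: `file_lookup` and `anchored_recall` are hypothetical helpers, and requiring every anchor to be present is a deliberately strict rule chosen to mirror the pill-bottle case.

```python
def file_lookup(records: dict, key: str) -> str:
    # A filing system fails loudly: a missing key raises KeyError.
    return records[key]


def anchored_recall(traces: list, cues: set):
    # Hypothetical strict rule: a trace surfaces only if all its anchors are present.
    for anchors, content in traces:
        if anchors <= cues:
            return content
    # No error and no warning. The item simply does not come to mind.
    return None


records = {"pills": "bathroom shelf"}
traces = [(frozenset({"shelf, left corner"}), "pills")]

try:
    file_lookup(records, "inhaler")
    filing_failure = "none"
except KeyError:
    filing_failure = "KeyError"

seen_in_place = anchored_recall(traces, {"shelf, left corner", "morning"})
seen_after_move = anchored_recall(traces, {"shelf, 10cm to the right", "morning"})
```

The lookup tells you something is missing. The anchored recall returns nothing at all once the location cue changes, which is the silent failure described above.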

This is the paradox. The same system that gives you 3000 alarm codes through smell and temperature will lose your medication because someone tidied up. It’s not inconsistency. It’s the architecture being completely consistent — and that architecture being fundamentally different from what most people assume memory is.

What this means for AI

Christopher’s article focuses on AI needing less aggressive recall and more relational presence. The Self-ReCheck solution he describes — a relevance filter that asks “should I really surface this right now” — reduced over-personalisation by 29%. That’s meaningful. But it’s still a filing system with a better sorting mechanism.

What’s actually missing is the anchor layer.

Not “do I have information about this person.” Not even “is this information relevant right now.” But — what is the associative web that makes this information meaningful in context? What mood, what prior exchange, what unspoken pattern does this connect to? And does surfacing it serve the present moment or just demonstrate that I was paying attention?

That’s not a retrieval problem. That’s a relational architecture problem.

There are small signs this is understood, at least partially. Claude’s “skills” system points in the right direction — not a profile to retrieve, but a context web to reconstruct from. Tone, cognitive style, load thresholds, vocabulary, the framework someone thinks in. When it works, you’re not being remembered. You’re being oriented toward. That’s a different operation entirely.

But it’s still the exception, not the architecture. The industry is building better and better filing systems and calling it memory. Meanwhile the thing they’re trying to replicate — human associative memory — was never a filing system. It was always a reconstruction engine, running on anchors most people can’t name and wouldn’t think to measure.

Until we build AI that anchors rather than files, we’re not building memory. We’re building very expensive, very attentive record-keeping — and wondering why it feels wrong.

Christopher Michael writes Relational AI. His piece “Recall vs. Wisdom” is worth your time.

Love this! Makes me wonder: if this shift were made, how would it (or would it not) impact data center development & use, particularly over quantity & time?

Imperfect human memory is the feature, not a bug. Generative reconstruction via anchors & connections means the field can dissolve & reform as many different times/ways as needed. But eventually, that field can fade from the collected/collective memory for good. I think if humans were centered rather than AI, the shared “memory” between us could be generatively evolving & decaying. A new ecosystem, not tech first but life first.

Thank you for this!

This was a fascinating read to start my day, thank you!

I experienced what you’re describing in real time minutes before reading. A memory surfaced mid-makeup routine, triggered by context and sensation, not intention. And I recognized it as exactly what you’re writing about.